This article was published in Scientific American’s former blog network and reflects the views of the author, not necessarily those of Scientific American

In a recent Scientific American article entitled “Springtime for AI: The Rise of Deep Learning,” computer scientist Yoshua Bengio explains why complex neural networks are the key to true artificial intelligence as people have long envisioned it. It seems logical that the way to make computers as smart as humans is to program them to behave like human brains. However, given how little we know of how the brain functions, this task seems more than a little daunting. So how does deep learning work?

This visualization by Jen Christiansen explains the basic structure and function of neural networks.

Graphic by Jen Christiansen; PUNCHSTOCK (faces)

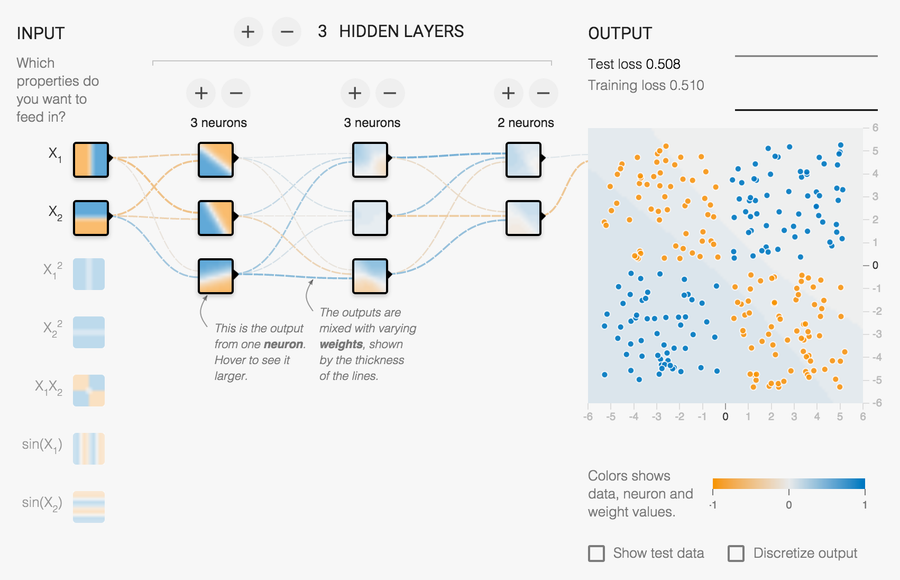

Evidently, these so-called “hidden layers” play a key role in breaking down visual components to decode the image as a whole. And we know there is an order to how the layers act: from input to output, each layer handles increasingly complex information. But beyond that, the hidden layers—as their name suggests—are shrouded in mystery.

As part of a recent collaborative project called TensorFlow, Daniel Smilkov and Shan Carter created a neural network playground, which aims to demystify the hidden layers by allowing users to interact and experiment with them.

Visualization by Daniel Smilkov and Shan Carter

There is a lot going on in this visualization, and I was recently fortunate enough to hear Fernanda Viégas and Martin Wattenberg break some of it down in their keynote talk at OpenVisConf. (Fernanda and Martin were part of the team behind TensorFlow, which is a much more complex, open-source tool for using neural networks in real-world applications.)

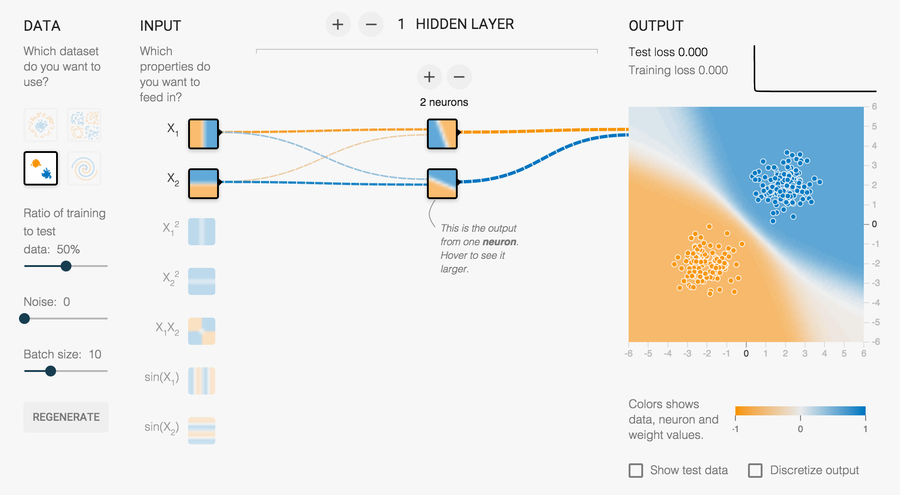

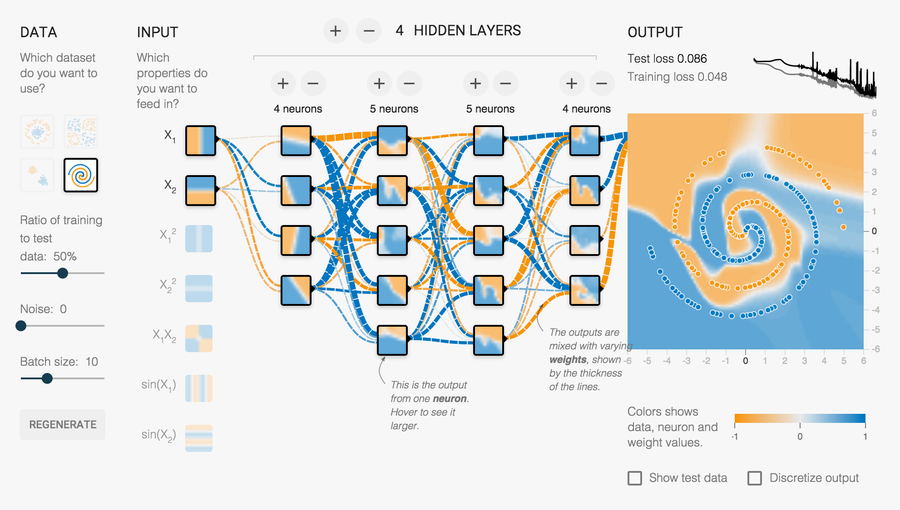

Rather than something as complicated as faces, the neural network playground uses blue and orange points scattered within a field to “teach” the machine how to find and echo patterns. The user can select dot arrangements of varying complexity and manipulate the learning system by adding new hidden layers, as well as new neurons within each layer. Then, each time the user hits the “play” button, she can watch as the background color gradient shifts to approximate the arrangement of blue and orange dots. As the pattern becomes more complex, additional neurons and layers help the machine complete the task more successfully.
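For readers who want to see the idea in code, here is a minimal sketch of the same kind of experiment: two groups of points, one hidden layer with two neurons, and repeated training steps. It is not the playground's actual code. The function names, the cluster positions, the learning rate and the choice of tanh and sigmoid activations are all assumptions made for illustration.

```python
import math
import random

def make_clusters(n_per_class=20, seed=0):
    """Two separated groups of 2-D points: label 1 ("blue"), label 0 ("orange")."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_per_class):
        data.append(((rng.gauss(2.0, 0.5), rng.gauss(2.0, 0.5)), 1))
        data.append(((rng.gauss(-2.0, 0.5), rng.gauss(-2.0, 0.5)), 0))
    return data

def init_network(n_hidden=2, seed=1):
    """Small random weights for one hidden layer (illustrative choice)."""
    rng = random.Random(seed)
    return {
        "w1": [[rng.uniform(-0.5, 0.5) for _ in range(2)] for _ in range(n_hidden)],
        "b1": [0.0] * n_hidden,
        "w2": [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)],
        "b2": 0.0,
    }

def forward(net, x):
    """Return the hidden-layer outputs and the predicted probability of "blue"."""
    hidden = [math.tanh(w[0] * x[0] + w[1] * x[1] + b)
              for w, b in zip(net["w1"], net["b1"])]
    z = sum(w * h for w, h in zip(net["w2"], hidden)) + net["b2"]
    return hidden, 1.0 / (1.0 + math.exp(-z))

def mean_loss(net, data):
    """Average cross-entropy between predictions and labels."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        _, p = forward(net, x)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)

def train_step(net, data, lr=0.05):
    """One full-batch gradient descent step (roughly one tick of "play")."""
    n = len(data)
    g_w1 = [[0.0, 0.0] for _ in net["w1"]]
    g_b1 = [0.0] * len(net["b1"])
    g_w2 = [0.0] * len(net["w2"])
    g_b2 = 0.0
    for x, y in data:
        hidden, p = forward(net, x)
        dz = (p - y) / n  # gradient of the loss w.r.t. the output neuron's input
        g_b2 += dz
        for j, h in enumerate(hidden):
            g_w2[j] += dz * h
            dh = dz * net["w2"][j] * (1.0 - h * h)  # back through tanh
            g_b1[j] += dh
            g_w1[j][0] += dh * x[0]
            g_w1[j][1] += dh * x[1]
    for j in range(len(net["w2"])):
        net["w2"][j] -= lr * g_w2[j]
        net["b1"][j] -= lr * g_b1[j]
        net["w1"][j][0] -= lr * g_w1[j][0]
        net["w1"][j][1] -= lr * g_w1[j][1]
    net["b2"] -= lr * g_b2

data = make_clusters()
net = init_network()
loss_before = mean_loss(net, data)
for _ in range(500):
    train_step(net, data)
loss_after = mean_loss(net, data)
print("loss before:", round(loss_before, 3), "loss after:", round(loss_after, 3))
```

Passing a larger `n_hidden` to `init_network` is the rough equivalent of adding neurons to the layer in the playground. A pattern like the spiral would likely need that, plus additional layers that this sketch does not implement.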

The machine easily solves this straightforward arrangement of dots, using only one hidden layer with two neurons.

The machine struggles to decode this more complex spiral pattern.

Besides the neuron layers, the machine has other meaningful features, such as the connections among the neurons. The connections appear as either blue or orange lines. Blue indicates a positive weight, meaning the network uses that neuron's output as given; orange indicates a negative weight, meaning the network uses the opposite of that neuron's output. Additionally, the thickness and opacity of each line indicate the strength of the connection, that is, how much weight the network gives it, much like the connections in our brains strengthen as we advance through a learning process.
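One way to make the connection lines concrete is to treat each line as a single number, a weight, whose sign sets the color and whose size sets the thickness. The sketch below assumes exactly that reading. `describe_connection` and `neuron_input` are hypothetical helpers written for this article, not functions from the playground or from TensorFlow.

```python
def describe_connection(weight, max_abs_weight=1.0):
    """Map a weight to a line color and a relative thickness between 0 and 1.

    Assumption for illustration: the sign picks the color and the magnitude
    picks the thickness, capped at max_abs_weight.
    """
    color = "blue" if weight >= 0 else "orange"
    thickness = min(abs(weight) / max_abs_weight, 1.0)
    return color, thickness

def neuron_input(activations, weights, bias=0.0):
    """Weighted sum a neuron receives from the neurons feeding into it."""
    return sum(a * w for a, w in zip(activations, weights)) + bias

# Two upstream neurons both output 1.0. The first connection is positive and
# passes its value through as given; the second is negative and flips it.
incoming = [1.0, 1.0]
weights = [0.5, -0.5]
for w in weights:
    print(w, describe_connection(w))
print("combined input:", neuron_input(incoming, weights))
```

In this toy case the two connections are equally strong but opposite in sign, so their contributions cancel. During training, it is these weights that change, which is why the lines in the visualization thicken, fade or switch color as the machine learns.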

Interestingly, as we get better at building neural networks for machines, we may end up revealing new information about how our own brains work. Visualizing and playing with the hidden layers seems like a great way to facilitate this process while also making the concept of deep learning accessible to a wider audience.