This article was published in Scientific American’s former blog network and reflects the views of the author, not necessarily those of Scientific American

The private space venture Blue Origin made some history on November 23rd, 2015, with a successful launch, re-entry, and landing of its fully reusable, passenger-carrying rocket.

The project's single-stage launch vehicle lofted a prototype six-person module to an altitude of approximately 100 kilometers (330,000 feet). The module re-entered the atmosphere and deployed parachutes for a dry touchdown, while the rocket performed a remarkable free-fall and powered landing to return to the very same launchpad it had left a short time earlier.

There are no bones to be picked about this: it's a very, very cool technical accomplishment. But it also provides some perspective on the challenges of getting into space, getting into orbit, and getting beyond.

Let's break the problem down very simply, starting with a slide I sometimes use in teaching the rudiments of spaceflight.

A quick summary of what it takes to reach Earth escape velocity. G is the gravitational constant, M-sub-Earth is the mass of the Earth, m is the mass of a spacecraft, and R-sub-Earth is the radius of the Earth.

The final equation represents the extreme, the whole hog. If you want to escape the Earth entirely, to get out to interplanetary space in one ballistic shot, you need to quickly reach the escape velocity v_e, which is about 11.2 kilometers per second (40,300 km/hour).
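
For reference, that final equation follows from a standard energy balance, sketched here with the caption's symbols (the layout of the original slide may differ). The spacecraft's kinetic energy at launch has to match the gravitational potential energy needed to climb from the Earth's surface out to infinity:

```latex
\frac{1}{2} m v_e^2 = \frac{G M_{\mathrm{Earth}}\, m}{R_{\mathrm{Earth}}}
\quad\Longrightarrow\quad
v_e = \sqrt{\frac{2 G M_{\mathrm{Earth}}}{R_{\mathrm{Earth}}}} \approx 11.2\ \mathrm{km/s}
```

Note that the spacecraft mass m cancels, so the escape velocity is the same for a pebble and a crewed capsule; only the energy bill scales with mass.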

Of course you don't have to do it like this. As long as your rocket can provide an upwards force greater than the gravitational force pulling against you, it's perfectly fine to slowly crawl your way out to deep space.

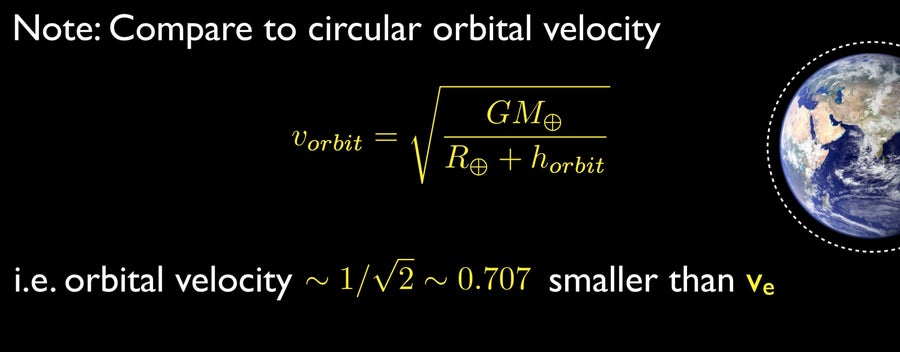

But how does this compare to, say, reaching a low Earth orbit? In terms of the required velocity to maintain a circular orbit at some height h_orbit above the planet, this next slide tells all:

A circular orbital velocity

This seems promising: you only need to reach about 70% of escape velocity in order to hold an orbit. If we ignore the energy required to gain an orbital altitude (to get above the drag of the atmosphere), the energy needed to just reach that orbital velocity is about 50% of that needed for complete escape.
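
Those two percentages are easy to check numerically. The following is a minimal sketch, not taken from the slides: the constants are standard reference values assumed here, and the 300 km orbit height is a hypothetical choice for illustration.

```python
import math

# Standard reference values (assumed here; the article quotes no constants).
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
M_EARTH = 5.972e24   # mass of the Earth, kg
R_EARTH = 6.371e6    # mean radius of the Earth, m


def escape_velocity():
    """Speed needed at the surface to coast away from Earth entirely, m/s."""
    return math.sqrt(2 * G * M_EARTH / R_EARTH)


def circular_velocity(h_orbit):
    """Speed of a circular orbit at height h_orbit (m) above the surface, m/s."""
    return math.sqrt(G * M_EARTH / (R_EARTH + h_orbit))


v_e = escape_velocity()
for h_orbit in (0.0, 300e3):  # 300 km is a hypothetical low Earth orbit height
    v_c = circular_velocity(h_orbit)
    print(f"h = {h_orbit / 1e3:5.0f} km: v_circ = {v_c / 1e3:.2f} km/s, "
          f"v_circ/v_e = {v_c / v_e:.3f}, "
          f"kinetic energy ratio = {(v_c / v_e) ** 2:.3f}")
```

With the altitude ignored (h = 0), the velocity ratio is exactly one over the square root of two, about 0.707, and the kinetic energy ratio is exactly one half. At a realistic orbital height the circular speed is slightly lower still.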

Except, how does this stack up against reaching a sub-orbital point? In other words, doing what Blue Origin did, which is basically to shoot straight up and fall back down again.

I won't list the details here, but the calculation is simple; we can just ask what the difference is in gravitational potential energy between an object at some altitude above the surface of the Earth and at the surface of the Earth. For a 100 kilometer jaunt that change in energy is about 1.5% of the energy required to reach escape velocity, or about 3% of the energy required to establish an orbit.
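
For readers who do want the details, here is a sketch of that calculation. It assumes a mean Earth radius of about 6,371 km (a value not quoted above), writes h for the peak altitude, and, as before, ignores the altitude of the orbit when computing the orbital energy:

```latex
\Delta U = G M_{\mathrm{Earth}}\, m \left( \frac{1}{R_{\mathrm{Earth}}} - \frac{1}{R_{\mathrm{Earth}} + h} \right)
         = \frac{G M_{\mathrm{Earth}}\, m}{R_{\mathrm{Earth}}} \cdot \frac{h}{R_{\mathrm{Earth}} + h}

E_{\mathrm{escape}} = \frac{G M_{\mathrm{Earth}}\, m}{R_{\mathrm{Earth}}}, \qquad
E_{\mathrm{orbit}} = \frac{G M_{\mathrm{Earth}}\, m}{2 R_{\mathrm{Earth}}}

\frac{\Delta U}{E_{\mathrm{escape}}} = \frac{h}{R_{\mathrm{Earth}} + h}
  = \frac{100\ \mathrm{km}}{6471\ \mathrm{km}} \approx 0.015, \qquad
\frac{\Delta U}{E_{\mathrm{orbit}}} = \frac{2h}{R_{\mathrm{Earth}} + h} \approx 0.031
```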

In other words, to progress from making a 100 km sub-orbital 'drop' to getting into low Earth orbit involves roughly a factor of 32 increase in energy budget. And that figure takes no account of how you manage to expend the energy, together with all the inefficiencies of propulsion and the impeding forces (like atmospheric friction) that are going to add to the recipe. A rocket to orbit must be a whole lot bigger and more powerful - just ask SpaceX.
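
The factor of roughly 32 can be reproduced with a few lines of arithmetic. This is a minimal sketch under the same idealizations as the text (no drag, no propulsion losses, orbital altitude ignored); the constants are standard reference values assumed here, and the function name `energy_budget` is purely illustrative.

```python
# Standard reference values (assumed here; the article quotes no constants).
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
M_EARTH = 5.972e24   # mass of the Earth, kg
R_EARTH = 6.371e6    # mean radius of the Earth, m


def energy_budget(h):
    """Compare idealized energies per kilogram for a straight-up hop to height h (m).

    Returns (hop / escape, hop / orbit, orbit / hop).
    """
    escape = G * M_EARTH / R_EARTH   # energy to escape from the surface, J/kg
    orbit = escape / 2               # kinetic energy of a circular orbit, altitude ignored
    hop = G * M_EARTH * (1 / R_EARTH - 1 / (R_EARTH + h))  # potential energy gain
    return hop / escape, hop / orbit, orbit / hop


hop_vs_escape, hop_vs_orbit, orbit_vs_hop = energy_budget(100e3)
print(f"hop / escape: {hop_vs_escape:.1%}")
print(f"hop / orbit:  {hop_vs_orbit:.1%}")
print(f"orbit / hop:  {orbit_vs_hop:.1f}")
```

For a 100 km hop this gives about 1.5%, about 3.1%, and a factor of a little over 32, in line with the figures above. Since real rockets also pay for drag, gravity losses, and the climb to orbital altitude, the true gap is larger than this idealized ratio suggests.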

Being stuck deep in a gravity well, with a cloak of atmosphere above our heads, may have been a critical ingredient for our evolution and for the four billion years of biological evolution that came before, but it sure can suck when it comes to reaching space.