This article was published in Scientific American’s former blog network and reflects the views of the author, not necessarily those of Scientific American.

In a previous post, “Jellyfish, Sexbots and the Solipsism Problem,” I discuss a major obstacle to research on consciousness. I know I am conscious, but I can’t be sure you or any other creature or thing is conscious. I call this the solipsism problem, but it is often called the other-minds problem. Scientists cannot solve the problem because they lack a “consciousness meter,” a means of objectively measuring consciousness. Philosopher Colin McGinn recently emailed me to say that the other-minds problem “could in principle be resolved experimentally.” Below McGinn explains his proposal. -- John Horgan

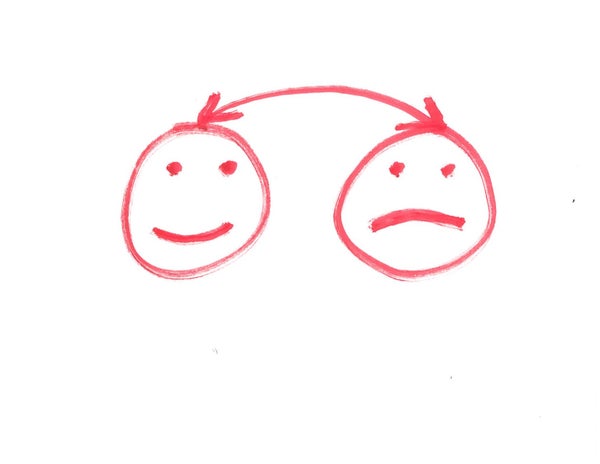

Suppose I want to know whether you have a mind, and if so whether it is like my mind. I am particularly concerned to know whether you have visual experiences like mine. So I arrange to have part of your brain transplanted into my brain (let’s suppose the technology is available): I have my visual cortex excised and yours inserted where mine used to be. If you are a zombie with no visual experience, then the brain splicing will leave me without visual experience. But if your brain is like mine and does generate conscious experience, then I will regain visual experience after the operation (and you will lose it). I can therefore conclude that you are (were) a conscious being. Moreover, I can resolve the question of whether you see the world as I do: I can determine whether, say, you have an inverted spectrum. If the splice leaves me seeing colors in the same way I saw them before, then I can conclude that you see (saw) colors as I do; but if I start seeing what I used to see as red as green, then I can conclude that you had an inverted spectrum. I want to know whether your brain has a property that, because of its inherent privacy, I cannot detect by ordinary observation, so I simply join a part of your brain to my brain and see what happens experientially, thus determining whether or not your brain has the property in question. I use introspection to settle the question, aided by brain splicing. Thus I resolve the other-minds problem once and for all. I perform an experiment the outcome of which is knowledge about which other beings have minds.

I could do the same with non-human brains. I just have bits of these brains spliced into mine and then I record the results. I could come to know what it is like to be a bat this way. I could settle the questions of reptile and insect consciousness. I could even in principle perform the splicing test on trees (with some pretty fancy equipment). All I need is a way to hook brains up with other brains. The case is just like having a brain transplant made of non-biological materials, such as a silicon-based replacement for my failing visual cortex. The replacement might duplicate the functional properties of my old nervous tissue, but it might still fail to generate the experiences I used to enjoy. Experimenters could try out different materials to see which, if any, produce consciousness (maybe only neural tissue just like the original does). My brain has the property of consciousness, which I can detect by means of introspection; so I just need to join another brain to mine in order to find out if it shares this property. There is nothing conceptually problematic about this; it is merely technically infeasible (at present). So I can in principle solve the problem of other minds: I can finally really know whether other minds exist. It will not be a matter of inference or conjecture anymore, but of introspective fact.

Someone might object that I can’t strictly infer the existence of other minds from a positive outcome in the splicing experiment, because it might be that the transferred tissue only becomes conscious when it leaves the other person’s head and enters mine. It was insentient in its original home, its possessor being a complete zombie, but inserting it into my wonderful head makes it light up with consciousness. All I strictly know is that it is conscious now, not that it was conscious before; so I can’t infer that its original owner must have been conscious. The point must be conceded: we have no strict logical deduction here. But surely the far more plausible position is that the brain tissue has the same causal powers in both locations, your head or mine. It is entirely reasonable to suppose that what I notice in myself already existed in you. Why should my head, which is just like yours, be able to inject consciousness into your brain tissue, while yours could not? No, if your brain tissue generates consciousness in me, then it also generated consciousness in you: you were conscious all along. Are we to suppose that if I give the tissue back to you it will suddenly cease to have the consciousness that it had when it was in my custody? We should rather accept this law: if a brain part is conscious in a particular subject, then it will be conscious in any other subject; and if it is not conscious in a particular subject, then it will not be conscious in any other subject (ceteris paribus). So we shouldn’t worry that the logical point undermines our ability in principle to solve the problem of other minds.

The problem of other minds is therefore in principle solvable. None of the other standard skeptical problems could be solved in this way: there is no point in splicing a physical object into my brain to see if I can tell whether it has the property of being real. That will just raise the old problem again: how can I infer from impressions of external objects that there are external objects? It seems to me that I have a table spliced into my brain, but that might be a dream or a hallucination. I have no possible mode of access to physical objects save through sense perception, but that leaves me vulnerable to skepticism. But I do have a possible mode of access to other minds that precludes skepticism, just as skepticism can be precluded for knowledge of my own mind, for I can determine the existence of other minds via brain splicing and introspection. This is the only philosophical problem I can think of that could in principle be resolved experimentally. As things stand, we must remain in doubt on the question, given our existing modes of access to other minds; but in principle the question could be resolved quite decisively. I find that rather comforting. -- Colin McGinn

Further Reading:

Jellyfish, Sexbots and the Solipsism Problem

Who Invented the Mind–Body Problem?

How Technology Can Make Valentine's Day Much, Much Better

CatCam Probes Philosophical Puzzle: What Is It Like to Be a Cat?

The Mind–Body Problem, Scientific Regress and "Woo"

Is Scientific Materialism "Almost Certainly False"?

Dispatch from the Desert of Consciousness Research, Part 1

Can Integrated Information Theory Explain Consciousness?

World's Smartest Physicist Thinks Science Can't Crack Consciousness

Christof Koch on Free Will, the Singularity and the Quest to Crack Consciousness