This article was published in Scientific American’s former blog network and reflects the views of the author, not necessarily those of Scientific American

It must be something in the air at the moment (gee, I wonder what it could be?) but recently I've found my thoughts wandering from exoplanets and life's origins to rather more parochial concerns. Actually, that's not entirely accurate, because a lot of those concerns are intimately linked with our understanding of the nature of planetary environments, biospheres, and the probability of life elsewhere.

The more we learn about the history of our own world, and the wider solar system, the more we see how both minor and major events in one era can cascade into radical changes in another. Contingency beats at the heart of many things, from planetary orbits to the present nature of life on Earth.

Frighteningly, we're no less vulnerable to any of these variable trajectories than any other species. Yet we do have, to some degree, a capacity to modify our choices and actions that has perhaps not been present in any other set of organisms in the 4-billion-year history of life on this cosmically small crumb of frozen minerals.

Our problem seems to be an inability to turn inaction into action, to stay focused and on task as a collective of hominids. It appears that we need constant reminding and nudging.

The 'Doomsday Clock' is a pretty good example of an effort to whisper those warnings in our ears. Since 1947 this imaginary clock, monitored by the Bulletin of the Atomic Scientists' Science and Security Board, has presented an estimate of the likelihood of global catastrophe at any given moment. It's hovered a few minutes from an imagined 'midnight' where the worst comes to pass. In 1953 it edged up to a chilling 2 minutes from doom, and after years at a more peaceful 5 to 17 minutes it has, in 2017, jumped back to a nasty 2.5 minutes from midnight.

But I wonder if we don't need more of these reminders, and perhaps a little more quantitative clout. After all, we live in the age of deep learning, Bayesian statistics, and simulated future climates and societies. Instead of a warning buzzer, can't we estimate the probability of what's to come? This could include climate change for sure, but also some deeper existential risk factors, from AI to asteroids, and the odds of our species getting off-world, or getting a whole lot smarter.

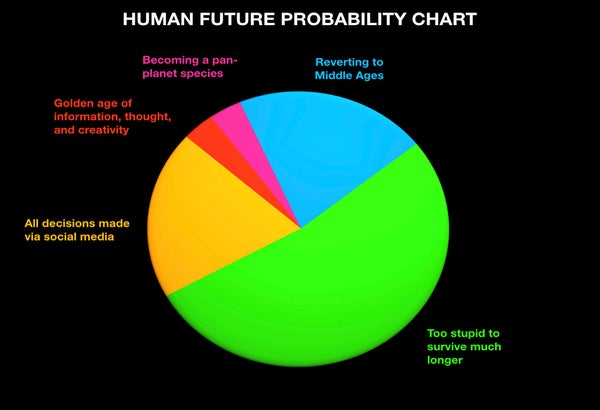

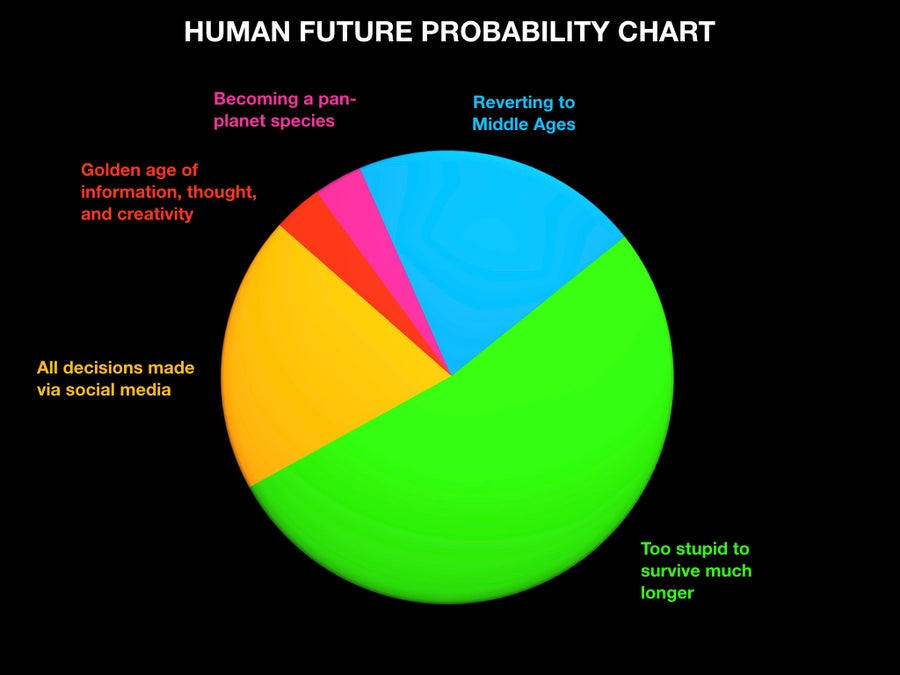

Right now I have nothing to offer except a cheeky example, laden with dark humor (the images above). But I think this could be done seriously (with genuine probabilities and projections), and perhaps it's time we looked at these odds for our future and used them to guide our choices instead of relying on either optimism or pessimism. I for one am getting my pen and paper out.

Care to add some wedges? Credit: C. Scharf