This article was published in Scientific American’s former blog network and reflects the views of the author, not necessarily those of Scientific American

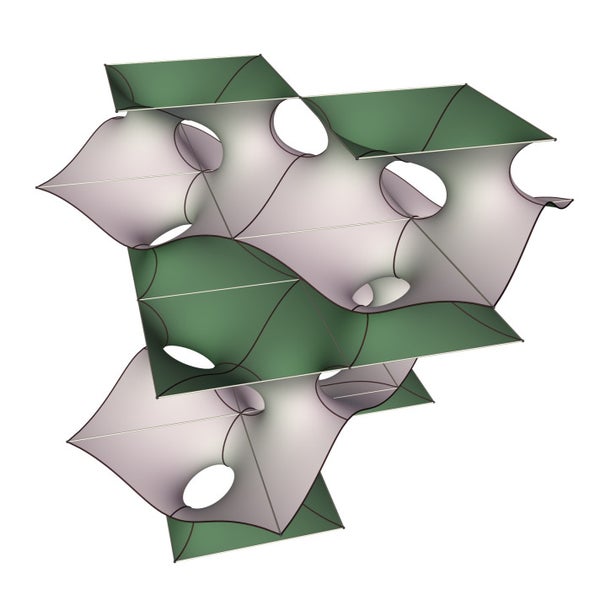

In my last column I recount how back in the 1990s two mathematicians named a geometric object after me, the “Horgan surface,” as revenge for “The Death of Proof.” The column gave me an excuse to revisit my controversial 1993 article, which argued that advances in computers, the growing complexity of mathematics and other trends were undermining the status of traditional proofs. As I wrote the column, it occurred to me that proofs generated by the Horgan surface contradict my death-of-proof thesis. I emailed a few experts to ask how they think my death-of-proof thesis has held up. Here are responses from computer scientist Scott Aaronson, mathematician-physicist Peter Woit and mathematics-software mogul Stephen Wolfram. (See Further Reading for links to my Q&As with them). I'll add more comments if/when they come in. –John Horgan

Scott Aaronson response (which he also just posted on his blog):

John, I like you so I hate to say it, but the last quarter century has not been kind to your thesis about “the death of proof”! Those mathematicians sending you the irate letters had a point: there’s been no fundamental change to mathematics that deserves such a dramatic title. Proof-based math remains quite healthy, with (e.g.) a solution to the Poincaré conjecture since your article came out, as well as to the Erdős discrepancy problem, the Kadison-Singer conjecture, Catalan’s conjecture, bounded gaps in primes, testing primality in deterministic polynomial time, etc. — just to pick a few examples from the tiny subset of areas that I know anything about.

On supporting science journalism

If you're enjoying this article, consider supporting our award-winning journalism by subscribing. By purchasing a subscription you are helping to ensure the future of impactful stories about the discoveries and ideas shaping our world today.

There are evolutionary changes to mathematical practice, as there always have been. Since 2009, the website MathOverflow has let mathematicians query the global hive-mind about an obscure reference or a recalcitrant step in a proof, and get near-instant answers. Meanwhile “polymath” projects have, with moderate success, tried to harness blogs and other social media to make advances on long-standing open math problems using massive collaborations.

While humans remain in the driver’s seat, there are persistent efforts to increase the role of computers, with some notable successes. These include Thomas Hales’s 1998 computer-assisted proof of the Kepler Conjecture (about the densest possible way to pack oranges) — now fully machine-verified from start to finish, after the Annals of Mathematics refused to publish a mixture of traditional mathematics and computer code. It also includes William McCune’s 1996 solution to the Robbins Conjecture in algebra (the computer-generated proof was only half a page, but involved substitutions so strange that for 60 years no human had found them); and at the “opposite extreme,” the 2016 solution to the Pythagorean triples problem by Marijn Heule and collaborators, which weighed in at 200 terabytes (at that time, “the longest proof in the history of mathematics”).

It’s conceivable that someday, computers will replace humans at all aspects of mathematical research — but it’s also conceivable that, by the time they can do that, they’ll be able to replace humans at music and science journalism and everything else!

New notions of proof — including probabilistic, interactive, zero-knowledge, and even quantum proofs — have seen further development by theoretical computer scientists since 1993. So far, though, these new types of proof remain either entirely theoretical (as with quantum proofs), or else they’re used for cryptographic protocols but not for mathematical research. (For example, zero-knowledge proofs now play a major role in certain cryptocurrencies, such as Zcash.)

In many areas of math (including my own, theoretical computer science), proofs have continued to get longer and harder for any one person to absorb. This has led some to advocate a split approach, wherein human mathematicians would talk to each other only about the handwavy intuitions and high-level concepts, while the tedious verification of details would be left to computers. So far, though, the huge investment of time needed to write proofs in machine-checkable format — for almost no return in new insight — has prevented this approach’s wide adoption.

Yes, there are non-rigorous approaches to math, which continue to be widely used in physics and engineering and other fields, as they always have been. But none of these approaches have displaced proof as the gold standard whenever it’s available. If I had to speculate about why, I’d say: if you use non-rigorous approaches, then even if it’s clear to you under what conditions your results can be trusted, it’s probably much less clear to anybody else. Also, even if only one segment of a research community cares about rigor, whatever earlier work that segment builds on will need to be rigorous as well — thereby exerting constant pressure in that direction. Thus, the more collaborative a given research area becomes, the more important is rigor.

For my money, the elucidation of the foundations of mathematics a century ago, by Cantor, Frege, Peano, Hilbert, Russell, Zermelo, Gödel, Turing, and others, still stands as one of the greatest triumphs of human thought, up there with evolution or quantum mechanics or anything else. It’s true that the ideal set by these luminaries remains mostly aspirational. When mathematicians say that a theorem has been “proved,” they still mean, as they always have, something more like: “we’ve reached a social consensus that all the ideas are now in place for a strictly formal proof that could be verified by a machine … with the only task remaining being massive rote coding work that none of us has any intention of ever doing!” It’s also true that mathematicians, being human, are subject to the full panoply of foibles you might expect: claiming to have proved things they haven’t, squabbling over who proved what, accusing others of lack of rigor while hypocritically taking liberties themselves. But just like love and honesty remain fine ideals no matter how often they’re flouted, so too does mathematical rigor.

Peter Woit response:

What most strikes me thinking back to this debate from a quarter-century ago is how little has changed. There's a lot of money and attention now going to data science, machine learning, AI and such, but besides more of our students taking jobs in those areas, the effect on pure mathematics research has been minimal. One change is that the Internet has provided better access to high-quality mathematics research materials and discussions, with examples videos of talks, discussions on MathOverflow, and my colleague Johan deJong's Stacks Project. This kind of change has people communicating much as they have always done, just more efficiently.

At the same time, computers continue to only rarely have any role in the creation and checking of the proofs of mathematical theorems. The intense debate surrounding Mochizuki's claimed proof of the abc conjecture provides an interesting test case. The problems with understanding and checking the proof have involved the best minds in the field engaged in a difficult struggle for comprehension, with computerized proof checking playing no role at all. There is no evidence that computer software is any closer now than in 1993 to being to compete with Peter Scholze and other experts who have worked on analyzing Mochizuki's arguments. If there's a new positive development ahead in this story, it's going to be progress towards deeper understanding coming from a flesh and blood mathematician, not a tech industry server farm.

Stephen Wolfram response. When I contacted Wolfram, creator of Mathematica and other products, a publicist sent me a link to Wolfram's recent essay “Logic, Explainability and the Future of Understanding.” It is crammed with provocative assertions about the nature of mathematics, logic, proof, computation and knowledge in general. Wolfram claims, for starters, to have mapped out the space of all possible logical axioms, suggesting, he contends, that the axioms upon which we commonly rely are not somehow optimal or necessary but arbitrary. My takeaway is that the space of possible mathematics, while infinite, may be much more infinite than generally suspected. Wolfram also suggests that with the help of one of his inventions, Wolfram Language, computer proofs need not be black boxes, which generate a result but little understanding. Here is how he puts it:

At some level I think it’s a quirk of history that proofs are typically today presented for humans to understand, while programs are usually just thought of as things for computers to run… [O]ne of the main goals of my own efforts over the past several decades has been to change this—and to develop in the Wolfram Language a true “computational communication language” in which computational ideas can be communicated in a way that is readily understandable to both computers and humans.

But Wolfram warns that we will always bump up against the limits of understanding:

In mathematics we’re used to building our stack of knowledge so that each step is something we can understand. But experimental mathematics—as well as things like automated theorem proving—make it clear that there are places to go that won’t have this feature. Will we call this “mathematics”? I think we should. But it’s a different tradition from what we’ve mostly used for the past millennium. It’s one where we can still build abstractions, and we can still construct new levels of understanding. But somewhere underneath there will be all sorts of computational irreducibility that we’ll never really be able to bring into the realm of human understanding.

Karl Dahlke response: Dahlke is a mathematician with whom I have previously corresponded. See his website here.

There may be a parallel between the end of science, one of your favorite topics, and the end of formal proofs. Both could more accurately be rephrased as an inescapable law of diminishing returns. We don't expect a paradigm shift in any of these arenas, and although incremental progress is always possible, it is attained at an ever-increasing cost. The low hanging fruit of math and science was plucked during the Renaissance, and by 1960, the middle branches had been stripped as well. To reach the canopy, we bring computers to bear, as though a cherry-picker were ferrying us higher up the tree, but this technology assists us, it does not replace us. A human still has to pick the fruit by hand, and I don't expect that to change any time soon.

In particle physics, the supercollider that confirmed the Higgs boson may be the largest machine ever built, yet humans had to interpret the data; the collider did not discover anything on its own. In the same way, computers assist mathematicians in their efforts, but we have to construct the formal proofs ourselves. You mentioned David Hoffman's surfaces, where computers provided intuition, but mathematicians wrote the proofs.

The "Experimental Geometry Lab" is another example. Bill Goldman says in part, "the exercise of trying to explain math ... actually helped us discover new results, even though the computers themselves didn't really play much of a role in the discovery. The level of detail required to program a computer to produce geometric images can improve a researcher's understanding of the ideas in play."

Along with computers, collaboration is key, often on an international scale. Thousands of scientists and engineers worked together to confirm the Higgs Boson, and in the same fashion, hundreds of mathematicians collaborated to prove Fermat's Last Theorem. Andrew Wiles got the credit, and his contribution should not be underestimated, but he stood on the shoulders of giants. One human mind can barely contain, much less create, a modern proof, even with the assistance of computers. This Nova special lists all the mathematicians that made Wile's proof possible; jump to the end and watch it scroll by. http://www.pbs.org/wgbh/nova/proof/wiles.html

All this has ramifications for graduate students, as I discovered in 1990. My academic advisor told me the mean time to get a Ph.D. at Berkeley was 7.5 years. I was nonplussed. Was I willing to spend a tenth of my life obtaining a Ph.D., while my savings account dwindled, and my wife's biological clock ticked on? As it turns out, I was not; I got my masters degree, landed a job as a computer programmer, and started a family. The criterion for a Ph.D. is, and always has been, original research, with a subtext of "interesting" original research, as judged by experts in the field. However, new results are increasingly difficult to obtain.

This is particularly true in pure mathematics, but it may hold in other fields as well. At some point the criterion for a Ph.D. may have to shift - perhaps a contribution to ongoing research, even if it hasn't yielded any fruit yet, or perhaps a repackaging of existing material for clarity or for educational purposes, like a band covering a pre-existing song. I can't predict where this will lead, but few graduate students can commit 8 or 10 years of their life to a doctoral program, in search of something new under the sun, while they moonlight to remain solvent.

Further Reading:

The Horgan Surface and the Death of Proof

How William Thurston (RIP) Helped Bring About "The Death of Proof"

Who Discovered the Mandelbrot Set?

Was I Wrong about The End of Science?

Beauty Does Not Equal Truth, in Physics or Elsewhere

Bayes's Theorem: What's the Big Deal?

Mind-Body Problems (free online book)

See also Q&As with Scott Aaronson, Stephen Wolfram, Edward Witten and Peter Woit.