This article was published in Scientific American’s former blog network and reflects the views of the author, not necessarily those of Scientific American

I’m not sure when I first heard of Bayes’ theorem. But I only really started paying attention to it over the last decade, after a few of my wonkier students touted it as an almost magical guide for navigating through life.

My students’ rants confused me, as did explanations of the theorem on Wikipedia and elsewhere, which I found either too dumbed-down or too complicated. I conveniently decided that Bayes was a passing fad, not worth deeper investigation. But now Bayes fever has become too pervasive to ignore.

Bayesian statistics “are rippling through everything from physics to cancer research, ecology to psychology,” The New York Times reports. Physicists have proposed Bayesian interpretations of quantum mechanics and Bayesian defenses of string and multiverse theories. Philosophers assert that science as a whole can be viewed as a Bayesian process, and that Bayes can distinguish science from pseudoscience more precisely than falsification, the method popularized by Karl Popper.

Artificial-intelligence researchers, including the designers of Google’s self-driving cars, employ Bayesian software to help machines recognize patterns and make decisions. Bayesian programs, according to Sharon Bertsch McGrayne, author of a popular history of Bayes’ theorem, “sort spam from e-mail, assess medical and homeland security risks and decode DNA, among other things.” On the website Edge.org, physicist John Mather frets that Bayesian machines might be so intelligent that they make humans “obsolete.”

Cognitive scientists conjecture that our brains incorporate Bayesian algorithms as they perceive, deliberate, decide. In November, scientists and philosophers explored this possibility at a conference at New York University called “Is the Brain Bayesian?” (I discuss the meeting on Bloggingheads.tv and in this follow-up post, “Are Brains Bayesian?”)

Zealots insist that if more of us adopted conscious Bayesian reasoning (as opposed to the unconscious Bayesian processing our brains supposedly employ), the world would be a better place. In “An Intuitive Explanation of Bayes’ Theorem,” AI theorist Eliezer Yudkowsky (with whom I once discussed the Singularity on Bloggingheads.tv) acknowledges Bayesians’ cultish fervor:

“Why does a mathematical concept generate this strange enthusiasm in its students? What is the so-called Bayesian Revolution now sweeping through the sciences, which claims to subsume even the experimental method itself as a special case? What is the secret that the adherents of Bayes know? What is the light that they have seen? Soon you will know. Soon you will be one of us.” Yudkowsky is kidding. Or is he?

Given all this hoopla, I’ve tried to get to the bottom of Bayes, once and for all. Of the countless explanations on the web, ones I’ve found especially helpful include Yudkowsky’s essay, Wikipedia’s entry and shorter pieces by philosopher Curtis Brown and computer scientists Oscar Bonilla and Kalid Azad. In this post, I’ll try to explain—primarily for my own benefit—what Bayes is all about. I trust kind readers will, as usual, point out any errors.*

Named after its inventor, the 18th-century Presbyterian minister Thomas Bayes, Bayes’ theorem is a method for calculating the validity of beliefs (hypotheses, claims, propositions) based on the best available evidence (observations, data, information). Here’s the most dumbed-down description: Initial belief plus new evidence = new and improved belief.

Here’s a fuller version: The probability that a belief is true given new evidence equals the probability that the belief is true regardless of that evidence times the probability that the evidence is true given that the belief is true divided by the probability that the evidence is true regardless of whether the belief is true. Got that?

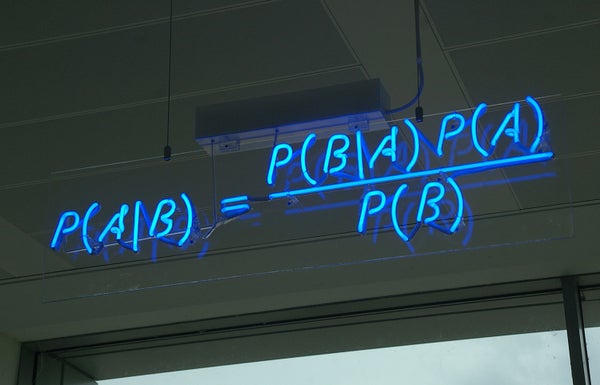

The basic mathematical formula takes this form: P(B|E) = P(B) × P(E|B) / P(E), with P standing for probability, B for belief and E for evidence. P(B) is the probability that B is true, and P(E) is the probability that E is true. P(B|E) means the probability of B if E is true, and P(E|B) is the probability of E if B is true.
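For readers who think in code, the formula translates almost symbol for symbol into a few lines of Python. This is a minimal sketch for illustration only, not code from any of the sources mentioned here; the function name `posterior` and its parameter names are hypothetical labels chosen for readability.

```python
def posterior(p_b: float, p_e_given_b: float, p_e: float) -> float:
    """Return P(B|E) = P(B) * P(E|B) / P(E).

    p_b          P(B): probability the belief is true before seeing the evidence
    p_e_given_b  P(E|B): probability of the evidence if the belief is true
    p_e          P(E): probability of the evidence, whether or not B is true
    """
    # P(E) sits in the denominator, so it must be a positive probability.
    if not 0.0 < p_e <= 1.0:
        raise ValueError("P(E) must be greater than 0 and at most 1")
    return p_b * p_e_given_b / p_e
```

Note that the function simply takes P(E) as an input; in practice that is often the hardest number to pin down.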

Medical testing often serves to demonstrate the formula. Let’s say you get tested for a cancer estimated to occur in one percent of people your age. If the test is 100 percent reliable, you don’t need Bayes’ theorem to know what a positive test means, but let’s use the theorem anyway, just to see how it works.

To solve for P(B|E), you plug the data into the right side of Bayes’ equation. P(B), the probability that you have cancer prior to getting tested, is one percent, or .01. So is P(E), the probability that you will test positive. Because they are in the numerator and denominator, respectively, they cancel each other out, and you are left with P(B|E) = P(E|B) = 1. If you test positive, you definitely have cancer, and vice versa.
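Here is the same perfect-test calculation as a small Python sketch, assuming, as the example does, a one percent prior and a test that never errs. P(E) is built up from its two possible sources, sick people who test positive and healthy people who test positive; with a perfect test the second source is zero. The variable names are illustrative.

```python
p_cancer = 0.01            # P(B): prior probability of cancer
sensitivity = 1.0          # P(E|B): every person with cancer tests positive
false_positive_rate = 0.0  # P(E|not B): no healthy person tests positive

# P(E): probability of a positive test, counting sick and healthy people together
p_positive = p_cancer * sensitivity + (1 - p_cancer) * false_positive_rate

# Bayes' theorem: P(B|E) = P(B) * P(E|B) / P(E)
p_cancer_given_positive = p_cancer * sensitivity / p_positive
print(p_cancer_given_positive)  # 1.0
```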

In the real world, tests are rarely if ever totally reliable. So let’s say your test is 99 percent reliable. That is, 99 out of 100 people who have cancer will test positive, and 99 out of 100 who are healthy will test negative. That’s still a terrific test. If your test is positive, how probable is it that you have cancer?

Now Bayes’ theorem displays its power. Most people assume the answer is 99 percent, or close to it. That’s how reliable the test is, right? But the correct answer, yielded by Bayes’ theorem, is only 50 percent.

Plug the data into the right side of Bayes’ equation to find out why. P(B) is still .01. P(E|B), the probability of testing positive if you have cancer, is now .99. So P(B) times P(E|B) equals .01 times .99, or .0099. This is the probability that you will get a true positive test, which shows you have cancer.

What about the denominator, P(E)? Here is where things get tricky. P(E) is the probability of testing positive whether or not you have cancer. In other words, it includes false positives as well as true positives.

To calculate the probability of a false positive, you multiply the rate of false positives, which is one percent, or .01, by the proportion of people who don’t have cancer, .99. The total comes to .0099. Yes, your terrific, 99-percent-accurate test yields as many false positives as true positives.

Let’s finish the calculation. To get P(E), add true and false positives for a total of .0198, which when divided into .0099 comes to .5. So once again, P(B|E), the probability that you have cancer if you test positive, is 50 percent.
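The whole 99-percent calculation fits in a short Python sketch. The function name `prob_cancer_given_positive` is hypothetical; its inputs are the prior P(B), the test's sensitivity P(E|B) and its false-positive rate, which the example above sets to .01, .99 and .01.

```python
def prob_cancer_given_positive(prior: float, sensitivity: float,
                               false_positive_rate: float) -> float:
    """P(B|E) for a positive test result, via Bayes' theorem."""
    true_positives = prior * sensitivity                 # P(B) * P(E|B)
    false_positives = (1 - prior) * false_positive_rate  # P(not B) * P(E|not B)
    p_positive = true_positives + false_positives        # P(E)
    return true_positives / p_positive

print(round(prob_cancer_given_positive(0.01, 0.99, 0.01), 4))  # 0.5
```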

If you get tested again, you can reduce your uncertainty enormously, because your probability of having cancer, P(B), is now 50 percent rather than one percent. If your second test also comes up positive, Bayes’ theorem tells you that your probability of having cancer is now 99 percent, or .99. As this example shows, iterating Bayes’ theorem can yield extremely precise information.
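Iterating just means feeding each answer back in as the next prior. The sketch below assumes, as the example implicitly does, that the two test results are independent of each other for a given patient; `update` is a hypothetical helper name.

```python
def update(prior: float, sensitivity: float = 0.99,
           false_positive_rate: float = 0.01) -> float:
    """Return the probability of cancer after one more positive test."""
    true_positives = prior * sensitivity
    false_positives = (1 - prior) * false_positive_rate
    return true_positives / (true_positives + false_positives)

belief = 0.01  # starting prior: one percent prevalence
for test_number in (1, 2):
    belief = update(belief)  # the last posterior becomes the new prior
    print(f"after positive test {test_number}: {belief:.2f}")
# after positive test 1: 0.50
# after positive test 2: 0.99
```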

But if the reliability of your test is 90 percent, which is still pretty good, your chances of actually having cancer even if you test positive twice are still less than 50 percent. (Check my math with the handy calculator in this blog post.)
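The 90 percent claim can also be checked with a short sketch instead of a calculator. It again assumes independent tests, and it reads "90 percent reliable" by analogy with the 99 percent example: 90 percent of sick people test positive and 10 percent of healthy people test positive. The helper name `update` is hypothetical.

```python
def update(prior: float, sensitivity: float, false_positive_rate: float) -> float:
    """Return the probability of cancer after one more positive test."""
    true_positives = prior * sensitivity
    false_positives = (1 - prior) * false_positive_rate
    return true_positives / (true_positives + false_positives)

belief = 0.01  # one percent prevalence
for test_number in (1, 2):
    belief = update(belief, sensitivity=0.90, false_positive_rate=0.10)
    print(f"after positive test {test_number}: {belief:.3f}")
# after positive test 1: 0.083
# after positive test 2: 0.450
```

Under these assumptions, two positive results from the 90 percent test leave the probability of cancer at about 45 percent, slightly worse than a coin flip.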

Most people, including physicians, have a hard time understanding these odds, which helps explain why we are overdiagnosed and overtreated for cancer and other disorders. This example suggests that the Bayesians are right: the world would indeed be a better place if more people (or at least more health-care consumers and providers) adopted Bayesian reasoning.

On the other hand, Bayes’ theorem is just a codification of common sense. As Yudkowsky writes toward the end of his tutorial: “By this point, Bayes' theorem may seem blatantly obvious or even tautological, rather than exciting and new. If so, this introduction has entirely succeeded in its purpose.”

Consider the cancer-testing case: Bayes’ theorem says your probability of having cancer if you test positive is the probability of a true positive test divided by the probability of all positive tests, false and true. In short, beware of false positives.
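Another way to see the ratio of true positives to all positives is to count people instead of multiplying probabilities. The sketch below imagines a hypothetical group of 10,000 people, a size chosen only so the counts come out whole, with the same one percent prevalence and 99-percent-reliable test.

```python
population = 10_000                  # hypothetical group size
sick = population // 100             # 1 percent prevalence: 100 people
healthy = population - sick          # 9,900 people
true_positives = sick * 99 // 100    # 99 of the 100 sick people test positive
false_positives = healthy // 100     # 1 percent of 9,900 healthy people: 99

# Share of positive tests that come from people who really have cancer
share_sick = true_positives / (true_positives + false_positives)
print(true_positives, false_positives, share_sick)  # 99 99 0.5
```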

Here is my more general statement of that principle: The plausibility of your belief depends on the degree to which your belief--and only your belief--explains the evidence for it. The more alternative explanations there are for the evidence, the less plausible your belief is. That, to me, is the essence of Bayes’ theorem.
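The same arithmetic extends to several competing explanations: each hypothesis's prior is multiplied by how well it predicts the evidence, and the results are divided by their sum. In this sketch the hypotheses and all the numbers are invented purely for illustration, and `posterior_of_first` is a hypothetical helper. Your belief keeps the same prior in both cases; the only change is that a rival appears that explains the evidence as well as yours does.

```python
def posterior_of_first(priors, likelihoods):
    """Posterior of hypothesis 0, given P(H_i) and P(E|H_i) for every hypothesis i."""
    joint = [p * like for p, like in zip(priors, likelihoods)]  # P(H_i) * P(E|H_i)
    return joint[0] / sum(joint)                                # divide by P(E)

# Your belief (prior 0.5) vs. one rival that explains the evidence poorly.
print(round(posterior_of_first([0.5, 0.5], [0.9, 0.1]), 3))  # 0.9
# Same prior for your belief, but one of two rivals now explains it equally well.
print(round(posterior_of_first([0.5, 0.25, 0.25], [0.9, 0.9, 0.1]), 3))  # 0.643
```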

“Alternative explanations” can encompass many things. Your evidence might be erroneous, skewed by a malfunctioning instrument, faulty analysis, confirmation bias, even fraud. Your evidence might be sound but explicable by many beliefs, or hypotheses, other than yours.

In other words, there’s nothing magical about Bayes’ theorem. It boils down to the truism that your belief is only as valid as its evidence. If you have good evidence, Bayes’ theorem can yield good results. If your evidence is flimsy, Bayes’ theorem won’t be of much use. Garbage in, garbage out.

The potential for Bayes abuse begins with P(B), your initial estimate of the probability of your belief, often called the “prior.” In the cancer-test example above, we were given a nice, precise prior of one percent, or .01, for the prevalence of cancer. In the real world, experts disagree over how to diagnose and count cancers. Your prior will often consist of a range of probabilities rather than a single number.
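A short sketch shows how much the answer depends on that range. It reruns the 99-percent-test calculation for three priors; the range of half a percent to two percent is hypothetical, chosen only to illustrate the spread, and the function name is likewise illustrative.

```python
def prob_cancer_given_positive(prior: float, sensitivity: float = 0.99,
                               false_positive_rate: float = 0.01) -> float:
    """P(B|E) for one positive test, via Bayes' theorem."""
    true_positives = prior * sensitivity
    false_positives = (1 - prior) * false_positive_rate
    return true_positives / (true_positives + false_positives)

for prior in (0.005, 0.01, 0.02):
    print(f"prior {prior:.1%}: posterior {prob_cancer_given_positive(prior):.0%}")
# prior 0.5%: posterior 33%
# prior 1.0%: posterior 50%
# prior 2.0%: posterior 67%
```

With the same test, a fourfold spread in the prior moves the answer from roughly one in three to roughly two in three.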

In many cases, estimating the prior is just guesswork, allowing subjective factors to creep into your calculations. You might be guessing the probability of something that--unlike cancer—does not even exist, such as strings, multiverses, inflation or God. You might then cite dubious evidence to support your dubious belief. In this way, Bayes’ theorem can promote pseudoscience and superstition as well as reason.

Embedded in Bayes’ theorem is a moral message: If you aren’t scrupulous in seeking alternative explanations for your evidence, the evidence will just confirm what you already believe. Scientists often fail to heed this dictum, which helps explain why so many scientific claims turn out to be erroneous. Bayesians claim that their methods can help scientists overcome confirmation bias and produce more reliable results, but I have my doubts.

And as I mentioned above, some string and multiverse enthusiasts are embracing Bayesian analysis. Why? Because the enthusiasts are tired of hearing that string and multiverse theories are unfalsifiable and hence unscientific, and Bayes’ theorem allows them to present the theories in a more favorable light. In this case, Bayes’ theorem, far from counteracting confirmation bias, enables it.

As science writer Faye Flam put it recently in The New York Times, Bayesian statistics “can’t save us from bad science.” Bayes’ theorem is an all-purpose tool that can serve any cause. The prominent Bayesian statistician Donald Rubin of Harvard has served as a consultant for tobacco companies facing lawsuits for damages from smoking.

I’m nonetheless fascinated by Bayes’ theorem. It reminds me of the theory of evolution, another idea that seems tautologically simple or dauntingly deep, depending on how you view it, and that has inspired abundant nonsense as well as profound insights.

Maybe it’s because my brain is Bayesian, but I’ve begun detecting allusions to Bayes everywhere. While plowing through Edgar Allan Poe’s Complete Works on my Kindle recently, I came across this sentence in The Narrative of Arthur Gordon Pym of Nantucket: “In no affairs of mere prejudice, pro or con, do we deduce inferences with entire certainty, even from the most simple data.”

Keep Poe’s caveat in mind before jumping on the Bayes-wagon.

*My friends Greg, Gary and Chris scanned this post before I published it, so they should be blamed for any errors.

Postscript: Andrew Gelman, a Bayesian statistician at Columbia, to whose blog I link above (in the remark on Donald Rubin), sent me this solicited comment: “I work on social and environmental science and policy, not on theoretical physics, so I can’t really comment one way or another on the use of Bayes to argue for string and multiverse theories! I actually don’t like the framing in which the outcome is the probability that a hypothesis is true. This works in some simple settings where the ‘hypotheses’ or possibilities are well defined, for example spell checking (see here: http://andrewgelman.com/2014/01/22/spell-checking-example/). But I don’t think it makes sense to think of the probability that some scientific hypothesis is true or false; see this paper: http://andrewgelman.com/2014/01/22/spell-checking-example/. In short, I think Bayesian methods are a great way to do inference within a model, but not in general a good way to assess the probability that a model or hypothesis is true (indeed, I think ‘the probability that a model or a hypothesis is true’ is generally a meaningless statement except as noted in certain narrow albeit important examples). I also noticed this paragraph of yours: ‘In many cases, estimating the prior is just guesswork, allowing subjective factors to creep into your calculations. You might be guessing the probability of something that--unlike cancer—does not even exist, such as strings, multiverses, inflation or God. You might then cite dubious evidence to support your dubious belief. In this way, Bayes’ theorem can promote pseudoscience and superstition as well as reason.’ I think this quote is somewhat misleading in that all parts of a model are subjective guesswork. Or, to put it another way, all of a statistical model needs to be understood and evaluated. I object to the attitude that the data model is assumed correct while the prior distribution is suspect. Here’s something I wrote on the topic: http://andrewgelman.com/2015/01/27/perhaps-merely-accident-history-skeptics-subjectivists-alike-strain-gnat-prior-distribution-swallowing-camel-likelihood/.”

Further Reading:

Was I Wrong about The End of Science?

A Dig Through Old Files Reminds Me Why I’m So Critical of Science.